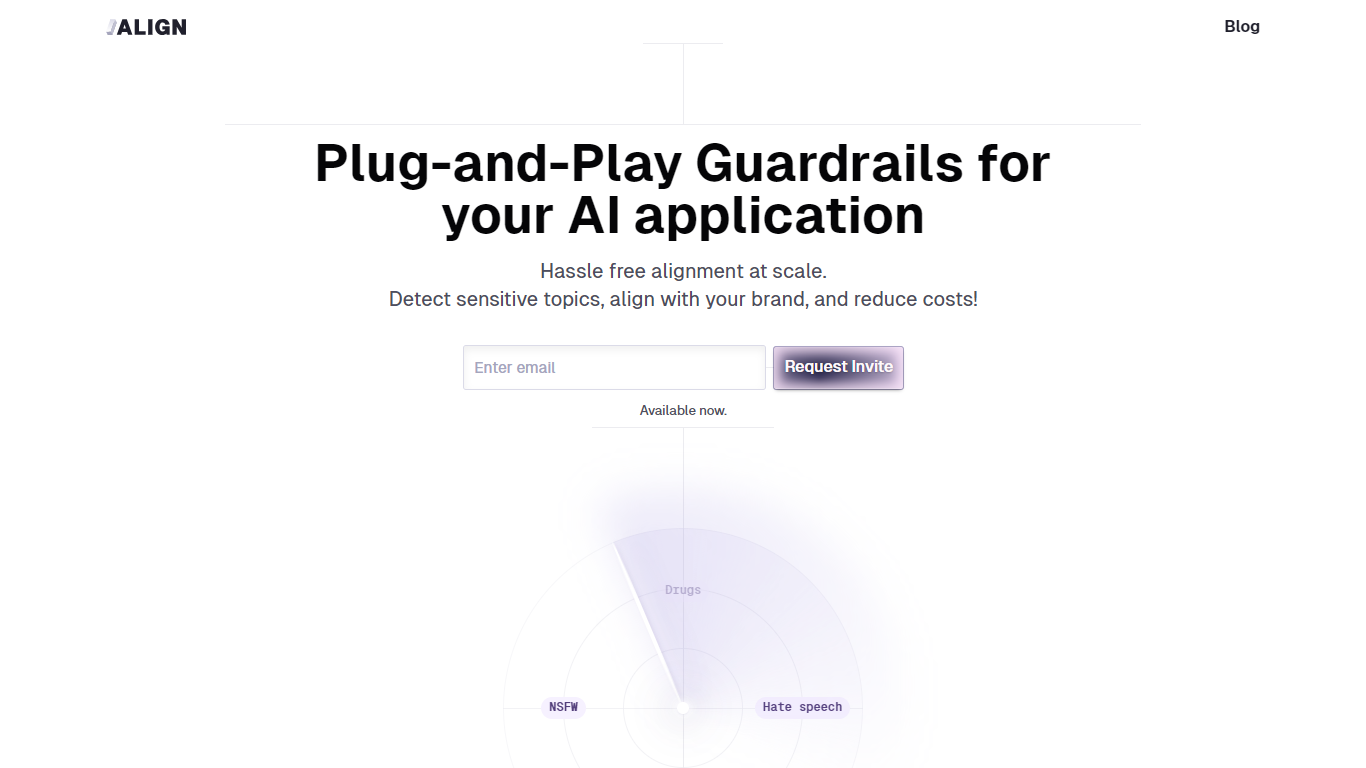

Align API

Align API offers advanced safeguards for AI applications, providing seamless alignment capabilities with impressive efficiency. The system is designed to ...

API-based safety checks: Integrate alignment safeguards directly into your AI applications via API.

Pre-built guardrails: Access ready-made safety rules and alignment configurations for common use cases.

Custom rule creation: Define and implement your own safety parameters tailored to specific applications.

Real-time monitoring: Track and analyse how your AI systems behave against safety benchmarks.

Integration support: Connect with popular AI platforms and frameworks through documented endpoints.
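
To make the custom rule and pre-response check ideas above more concrete, here is a minimal local sketch in Python. It does not use or reproduce the Align API's actual interface, which is not described here. Every name in it (`Rule`, `blocked_terms_rule`, `max_length_rule`, `check`, `guarded_reply`) is hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence


@dataclass
class Rule:
    """A hypothetical safety rule: a name plus a predicate that flags bad text."""
    name: str
    violates: Callable[[str], bool]


def blocked_terms_rule(name: str, terms: Sequence[str]) -> Rule:
    """Flag text containing any of the given terms (case-insensitive)."""
    lowered = [t.lower() for t in terms]
    return Rule(name, lambda text: any(t in text.lower() for t in lowered))


def max_length_rule(name: str, limit: int) -> Rule:
    """Flag text longer than `limit` characters."""
    return Rule(name, lambda text: len(text) > limit)


def check(text: str, rules: Sequence[Rule]) -> List[str]:
    """Return the names of all rules the text violates, in rule order."""
    return [rule.name for rule in rules if rule.violates(text)]


def guarded_reply(
    generate: Callable[[str], str],
    prompt: str,
    rules: Sequence[Rule],
    fallback: str = "Sorry, I can't help with that.",
) -> str:
    """Call the model, then return its reply only if no rule is violated."""
    reply = generate(prompt)
    return fallback if check(reply, rules) else reply
```

In this sketch a chatbot would pass its own model call as `generate`, so the check runs before any text reaches the user. A hosted service would presumably replace `check` with a network request, but the shape of that request is an assumption and is left out.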

Adding safety checks to chatbot applications before they interact with users

Implementing content filters in generative AI systems

Ensuring AI models stay within defined ethical boundaries in production

Testing AI applications against known safety risks during development

Monitoring deployed models for unexpected or unsafe behaviour patterns
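
As a rough illustration of the monitoring use case, the sketch below tracks the share of recent responses that were flagged and raises an alert when that share passes a threshold. It is a generic rolling-window counter, not the product's monitoring feature. The class name `ViolationMonitor` and its `window` and `threshold` parameters are assumptions made for this example.

```python
from collections import deque


class ViolationMonitor:
    """Hypothetical rolling monitor over the most recent safety-check results."""

    def __init__(self, window: int = 100, threshold: float = 0.05) -> None:
        # Keep only the last `window` results; older ones drop off automatically.
        self.results = deque(maxlen=window)
        self.threshold = threshold

    def record(self, violated: bool) -> None:
        """Store whether the latest response violated any rule."""
        self.results.append(violated)

    def rate(self) -> float:
        """Fraction of recorded responses in the window that were flagged."""
        if not self.results:
            return 0.0
        return sum(self.results) / len(self.results)

    def alert(self) -> bool:
        """True when the flagged fraction is strictly above the threshold."""
        return self.rate() > self.threshold
```

A deployment might call `record` after each safety check and poll `alert` on a schedule. The window size and threshold would need tuning against real traffic.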