EvalsOne

EvalsOne is a comprehensive platform designed to optimize generative AI applications by providing tools for evaluating AI models, prompts, and workflows. It aids developers and researchers in improving …

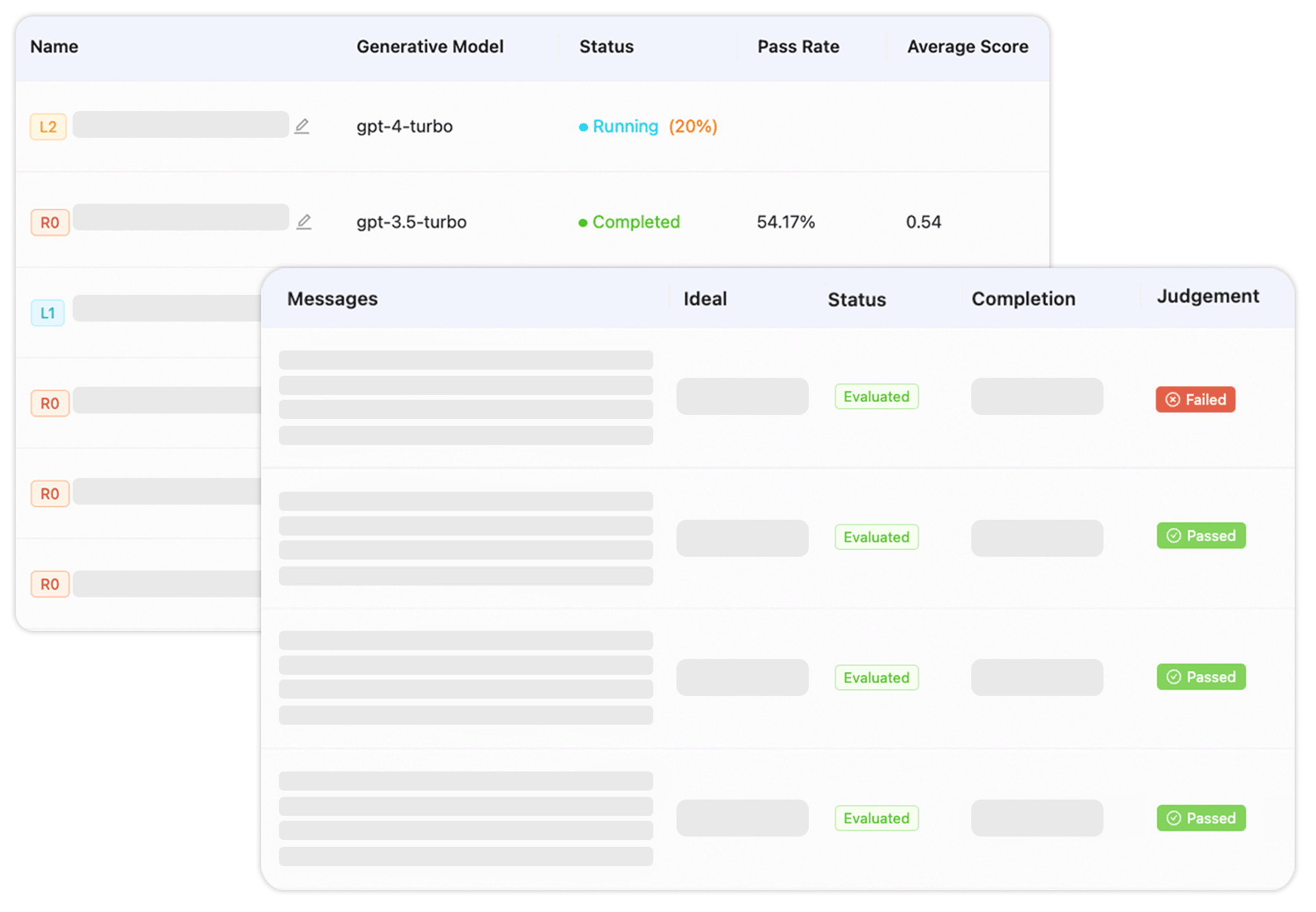

Model and prompt evaluation

Test different prompts and models against your data to see which performs better
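EvalsOne's own interface is not documented here. The following is only a generic Python sketch of what comparing prompts against your data involves: run every prompt variant through a model on the same test cases, score each output, and average the scores. The dataset, the prompt templates, `stub_model`, and the exact-match scorer are hypothetical stand-ins and are not part of EvalsOne.

```python
from statistics import mean
from typing import Callable

# Hypothetical test set: each case pairs a question with its reference answer.
DATASET = [
    {"question": "2 + 2", "expected": "4"},
    {"question": "capital of France", "expected": "Paris"},
    {"question": "3 * 3", "expected": "9"},
]

# Two hypothetical prompt variants to compare.
PROMPTS = {
    "terse": "Q: {question}",
    "explicit": "Reply with only the final answer.\nQ: {question}",
}

_KNOWN_ANSWERS = {"2 + 2": "4", "capital of France": "Paris", "3 * 3": "9"}


def stub_model(prompt: str) -> str:
    """Hypothetical stand-in for a real LLM call. It knows every answer but
    pads it with extra words unless the prompt asks for the bare answer."""
    question = prompt.rsplit("Q: ", 1)[1]
    answer = _KNOWN_ANSWERS[question]
    if "only the final answer" in prompt:
        return answer
    return f"The answer is {answer}."


def exact_match(output: str, expected: str) -> float:
    """Score 1.0 if the output equals the reference answer, else 0.0."""
    return 1.0 if output.strip() == expected else 0.0


def evaluate(
    models: dict[str, Callable[[str], str]],
    prompts: dict[str, str],
    dataset: list[dict[str, str]],
) -> dict[tuple[str, str], float]:
    """Return the mean score of every (model, prompt) pair over the dataset."""
    results = {}
    for model_name, model in models.items():
        for prompt_name, template in prompts.items():
            scores = [
                exact_match(model(template.format(**case)), case["expected"])
                for case in dataset
            ]
            results[(model_name, prompt_name)] = mean(scores)
    return results


if __name__ == "__main__":
    results = evaluate({"stub": stub_model}, PROMPTS, DATASET)
    # Print the best-scoring combination first.
    for (model_name, prompt_name), score in sorted(results.items(), key=lambda kv: -kv[1]):
        print(f"{model_name} + {prompt_name}: {score:.2f}")
```

Run as is, the sketch prints `stub + explicit: 1.00` before `stub + terse: 0.00`, because exact match penalizes the padded answers. A real setup would replace `stub_model` with actual model calls and use a scorer suited to the task.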

A/B testing with Fork feature

Create variations of prompts or workflows and compare results side by side
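A side-by-side comparison of an original prompt and its forked variation can be sketched generically as follows. The sketch assumes both variants have already been run and scored on the same test cases. The `Row` structure, the `compare_side_by_side` function, and the sample data are hypothetical illustrations and are not EvalsOne's Fork feature itself.

```python
from dataclasses import dataclass


@dataclass
class Row:
    """One test case scored under the original variant and its fork."""
    case: str
    base_output: str
    fork_output: str
    base_score: float
    fork_score: float


def compare_side_by_side(rows: list[Row]) -> dict[str, int]:
    """Print both outputs per case and tally which variant scored higher."""
    tally = {"fork_wins": 0, "base_wins": 0, "ties": 0}
    for row in rows:
        print(
            f"{row.case:<16} | base: {row.base_output} ({row.base_score:.1f})"
            f" | fork: {row.fork_output} ({row.fork_score:.1f})"
        )
        if row.fork_score > row.base_score:
            tally["fork_wins"] += 1
        elif row.fork_score < row.base_score:
            tally["base_wins"] += 1
        else:
            tally["ties"] += 1
    return tally


if __name__ == "__main__":
    # Hypothetical outputs and scores, for illustration only.
    rows = [
        Row("greeting", "Hi there!", "Hello! How can I help?", 0.6, 0.9),
        Row("order question", "Please wait.", "Let me check your order.", 0.4, 0.8),
        Row("small talk", "Nice weather.", "Nice weather.", 0.7, 0.7),
        Row("complaint", "Sorry about that.", "Noted.", 0.8, 0.5),
    ]
    print(compare_side_by_side(rows))
```

For the sample rows the tally is 2 fork wins, 1 base win, and 1 tie. Counting per-case wins, rather than comparing only averages, shows whether a variation helps broadly or only on a few cases.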

Multi-provider integration

Connect to various cloud services, local models, orchestration tools, and AI APIs

Automated insights

Get an automated analysis of test results without reviewing every output by hand
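How EvalsOne produces its insights is not covered here. As a hedged illustration only, a summary that spares you from reading every output might compute a pass rate and flag the lowest-scoring cases for review. The `summarize` function, the 0.5 pass threshold, and the result format are assumptions made for this sketch.

```python
from statistics import mean


def summarize(results: list[dict], threshold: float = 0.5, worst_n: int = 2) -> dict:
    """Condense per-case scores into a short report (hypothetical format)."""
    if not results:
        raise ValueError("no results to summarize")
    scores = [r["score"] for r in results]
    passed = sum(1 for s in scores if s >= threshold)
    # Lowest-scoring cases first, so a reviewer knows where to look.
    worst = sorted(results, key=lambda r: r["score"])[:worst_n]
    return {
        "pass_rate": passed / len(results),
        "mean_score": mean(scores),
        "review_first": [r["case"] for r in worst],
    }


if __name__ == "__main__":
    # Hypothetical per-case scores from an earlier evaluation run.
    results = [
        {"case": "refund policy", "score": 0.9},
        {"case": "shipping times", "score": 0.3},
        {"case": "password reset", "score": 0.7},
        {"case": "order tracking", "score": 0.2},
    ]
    print(summarize(results))
```

With the sample scores, half the cases pass, and "order tracking" and "shipping times" come first in the review list.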

Prompt refinement tools

Edit and improve prompts within the platform before moving to production

LLMOps workflow support

Cover testing needs from initial development through to live applications

Use cases

Comparing different prompt variations to find the clearest instructions for your LLM

Testing a new model against your current one before switching in production

Running quality checks on chatbot or content generation workflows before release

Tracking performance metrics over time as you refine your AI application

Evaluating multiple API providers to choose the most reliable for your use case