jj

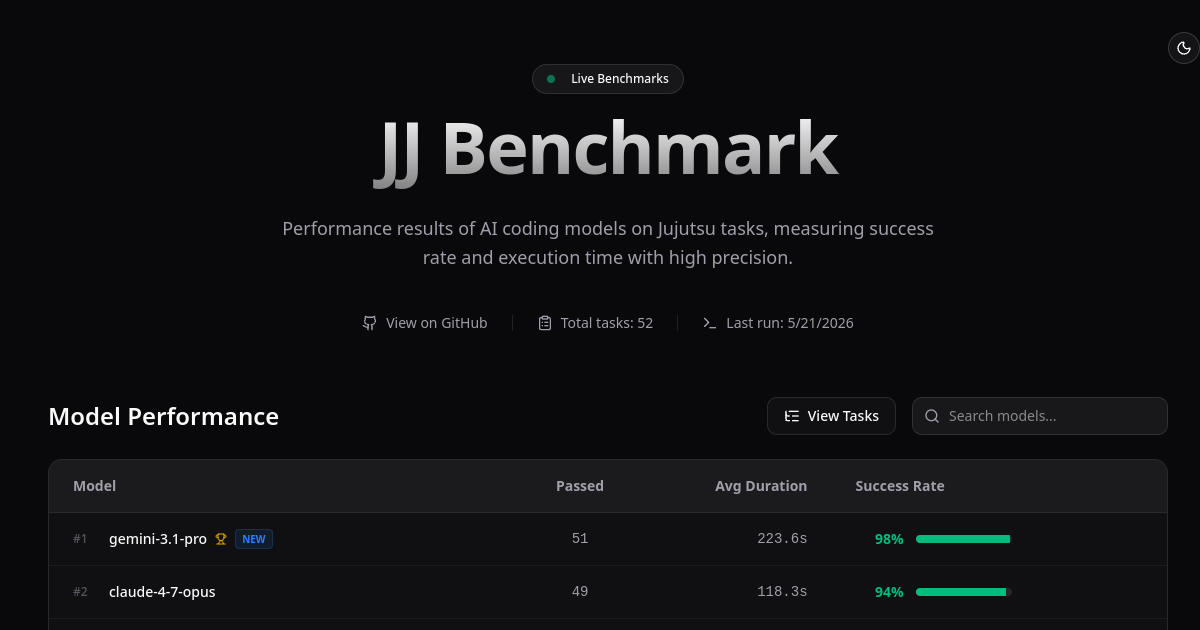

benchmark – Evaluating AI agents on Jujutsu version control

Performance metrics

Measures success rate and execution time for AI agents on version control tasks

Jujutsu compatibility

Benchmarks tasks performed with the Jujutsu version control system rather than traditional Git

Multi-model evaluation

Compares performance across different AI coding models and agents

High-precision measurement

Provides detailed accuracy and timing data for task completion

Standardized testing

Offers consistent, reproducible benchmark scenarios for fair model comparison

Public results dashboard

Displays comparative performance data for transparency and accessibility
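
As a rough illustration of the success-rate and execution-time metrics listed above, the sketch below aggregates per-task results into per-model figures. The record shape (`TaskResult`), the `summarize` helper, the model names, and the task names are all hypothetical and are not taken from the benchmark itself; this is only one plausible way such numbers could be computed.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskResult:
    """One agent attempt at one benchmark task (hypothetical record shape)."""
    model: str
    task: str
    passed: bool
    seconds: float


def summarize(results):
    """Return {model: (success_rate, mean_seconds)} over all attempts."""
    by_model = {}
    for r in results:
        by_model.setdefault(r.model, []).append(r)
    return {
        model: (
            sum(r.passed for r in runs) / len(runs),  # fraction of passed tasks
            mean(r.seconds for r in runs),            # average wall-clock time
        )
        for model, runs in by_model.items()
    }


# Made-up sample data, for illustration only.
results = [
    TaskResult("model-a", "squash-commits", True, 12.0),
    TaskResult("model-a", "rebase-branch", False, 30.0),
    TaskResult("model-b", "squash-commits", True, 8.0),
    TaskResult("model-b", "rebase-branch", True, 10.0),
]
summary = summarize(results)
for model, (rate, secs) in summary.items():
    print(f"{model}: success rate {rate:.0%}, mean time {secs:.1f}s")
```

Reporting both numbers per model keeps a fast but unreliable agent from looking better than a slower one that completes more tasks.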

AI model developers evaluating their agents' version control capabilities on Jujutsu workflows

Teams comparing different AI coding assistants to select the best fit for Jujutsu-based repositories

Researchers studying AI agent performance on version control and software engineering tasks

Organizations migrating to Jujutsu seeking data on which AI tools work best with the system

Academic studies on AI coding capabilities in modern version control environments