Keywords AI

The enterprise-grade software to build, monitor, and improve your AI application. Keywords AI is a full-stack LLM engineering platform for developers and PMs.

LLM gateway

Route requests to different language models through a single interface, with load balancing and fallback options
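As a rough illustration of what load balancing with fallback can look like, here is a minimal Python sketch. It does not use the Keywords AI API; `route_with_fallback`, the `Provider` type, and the weights are hypothetical names chosen for this example, and each provider is assumed to be a plain function that takes a prompt and returns text.

```python
import random
from typing import Callable, Optional, Sequence

# Hypothetical provider type: takes a prompt, returns a completion string.
Provider = Callable[[str], str]


def route_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Provider, float]],
    rng: Optional[random.Random] = None,
) -> tuple[str, str]:
    """Pick a provider by weight; on failure, fall back to the remaining ones.

    `providers` holds (name, callable, weight) triples with positive weights.
    Returns (provider_name, completion).
    """
    rng = rng or random.Random()
    remaining = list(providers)
    errors = []
    while remaining:
        # Weighted random choice spreads load across healthy providers.
        weights = [weight for _, _, weight in remaining]
        index = rng.choices(range(len(remaining)), weights=weights, k=1)[0]
        name, call, _ = remaining.pop(index)
        try:
            return name, call(prompt)
        except Exception as exc:  # any provider error triggers fallback
            errors.append((name, exc))
    raise RuntimeError(f"all providers failed: {errors}")
```

A production gateway would typically add timeouts, retries with backoff, and health tracking on top of this basic loop.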

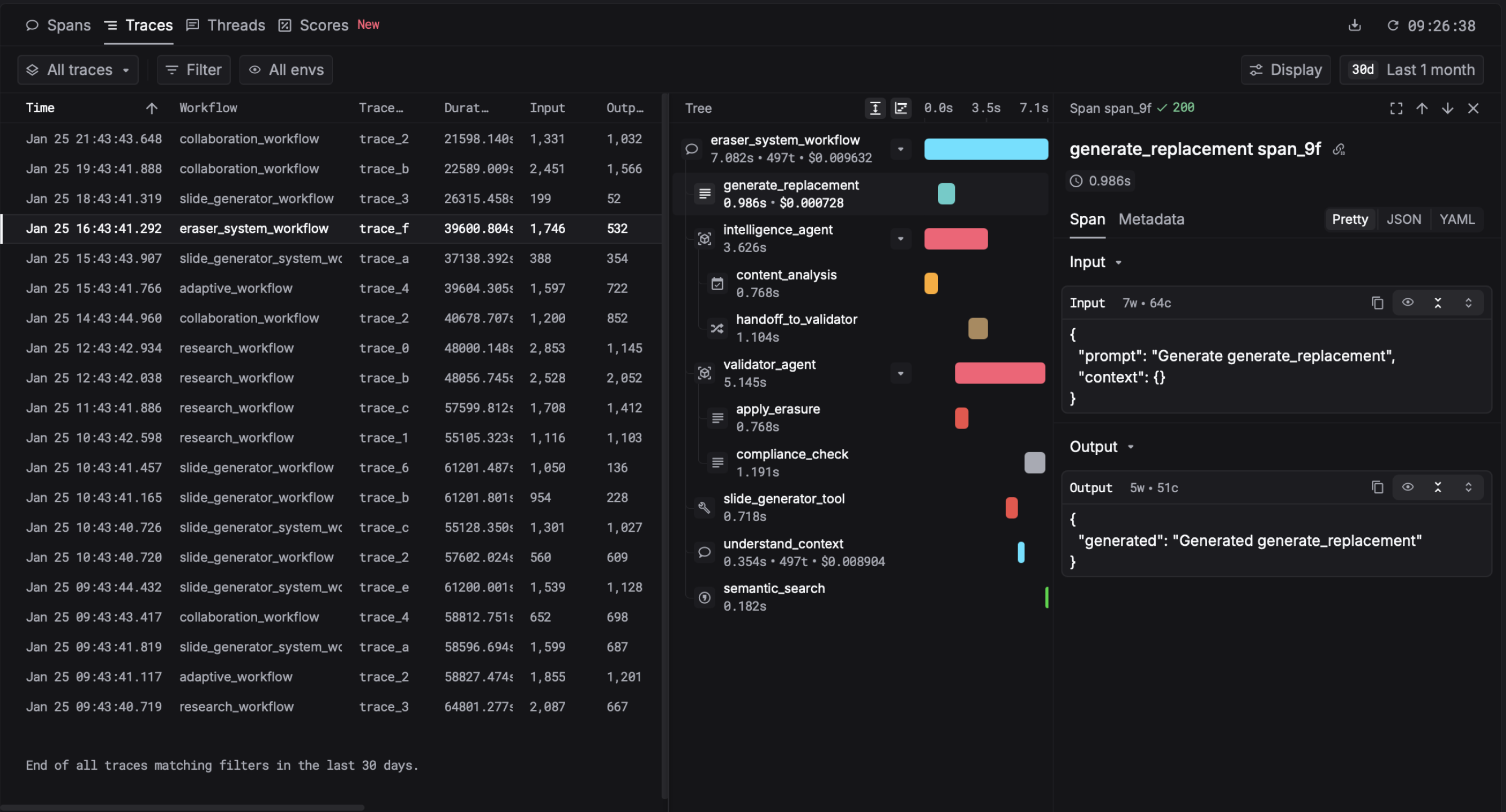

Observability

Track API calls, costs, latency, and token usage across all your LLM integrations
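To make the tracked quantities concrete, the sketch below records latency, token counts, and an estimated cost for each call. It is an illustration only, not the platform's logging format: `CallRecord`, `record_call`, and the `PRICES` table are hypothetical, the prices are made-up placeholders, and the wrapped function is assumed to return the text together with its prompt and completion token counts.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class CallRecord:
    model: str
    prompt_tokens: int
    completion_tokens: int
    latency_s: float
    cost_usd: float


# Hypothetical placeholder prices in USD per 1,000 tokens: (input, output).
PRICES = {"model-a": (0.5, 1.5)}


def record_call(
    model: str,
    call: Callable[[str], tuple[str, int, int]],
    prompt: str,
    log: list[CallRecord],
) -> str:
    """Run `call`, append a CallRecord to `log`, and return the text."""
    start = time.perf_counter()
    text, prompt_tokens, completion_tokens = call(prompt)
    latency = time.perf_counter() - start

    in_price, out_price = PRICES[model]
    cost = (prompt_tokens * in_price + completion_tokens * out_price) / 1000
    log.append(CallRecord(model, prompt_tokens, completion_tokens, latency, cost))
    return text
```

Aggregating the resulting records by model or by feature gives the per-integration totals described above.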

Prompt evaluation

Test and compare different prompts against your data to measure performance before deployment
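One simple way to picture this kind of comparison is an exact-match score per prompt over a small labelled dataset. The sketch below assumes that setup; `evaluate_prompts`, the `{input}` placeholder, and the dataset fields are hypothetical, and real evaluations often use richer metrics than exact match.

```python
from typing import Callable


def evaluate_prompts(
    templates: dict[str, str],
    dataset: list[dict[str, str]],
    model: Callable[[str], str],
) -> dict[str, float]:
    """Return the exact-match accuracy of each prompt template.

    Each template contains an `{input}` placeholder; each dataset row has
    "input" and "expected" keys. `model` maps a full prompt to output text.
    """
    scores = {}
    for name, template in templates.items():
        hits = 0
        for row in dataset:
            output = model(template.format(input=row["input"]))
            if output.strip() == row["expected"]:
                hits += 1
        scores[name] = hits / len(dataset)
    return scores
```

Running every candidate prompt over the same dataset keeps the comparison fair, since only the prompt wording changes between runs.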

Prompt optimisation

Iteratively improve prompts based on real-world performance data

Monitoring dashboard

View metrics and alerts to catch issues in production quickly

Use cases

Monitoring costs and performance of LLM-powered customer support chatbots

A/B testing different prompts for content generation at scale

Tracking reliability metrics for AI features in production applications

Optimising latency and quality trade-offs when using multiple LLM providers

Debugging unexpected behaviour in deployed AI models