Keywords AI

Enterprise-grade software to build, monitor, and improve your AI application. Keywords AI is a full-stack LLM engineering platform for developers and product managers.

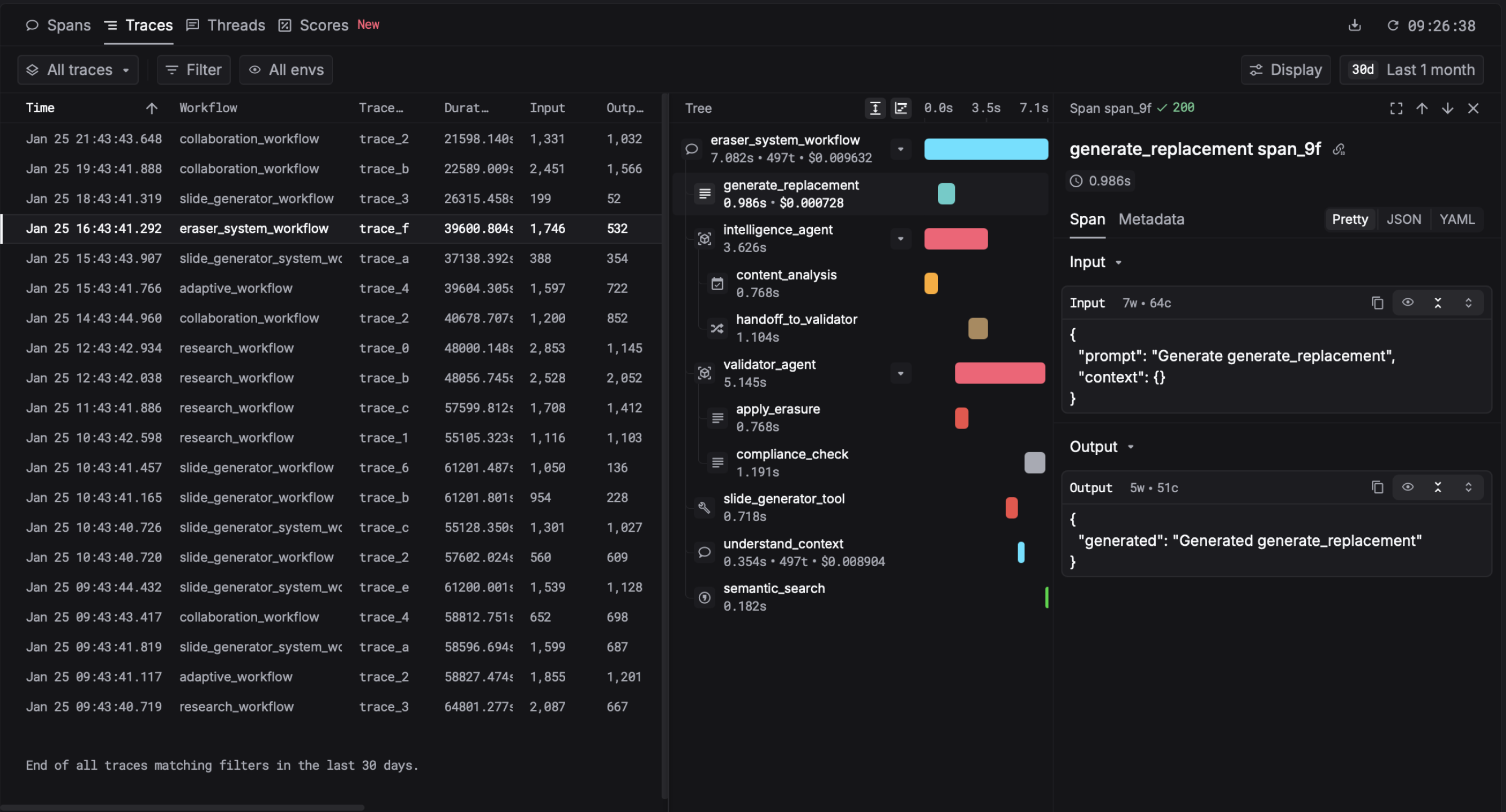

Observability and monitoring

Track how your LLM applications behave in production, including latency, costs, and error rates
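To make the three metrics concrete, here is a minimal sketch of how per-call latency, cost, and error rate could be aggregated. It is not the Keywords AI API: `CallRecord`, `Monitor`, and their fields are hypothetical names chosen for illustration only.

```python
from dataclasses import dataclass, field


@dataclass
class CallRecord:
    """One LLM call as a monitoring system might record it (hypothetical shape)."""
    latency_ms: float
    cost_usd: float
    ok: bool


@dataclass
class Monitor:
    """Hypothetical in-memory collector; a real platform would persist records."""
    records: list[CallRecord] = field(default_factory=list)

    def log(self, latency_ms: float, cost_usd: float, ok: bool) -> None:
        self.records.append(CallRecord(latency_ms, cost_usd, ok))

    def summary(self) -> dict[str, float]:
        n = len(self.records)
        if n == 0:
            return {"calls": 0, "avg_latency_ms": 0.0,
                    "total_cost_usd": 0.0, "error_rate": 0.0}
        return {
            "calls": n,
            "avg_latency_ms": sum(r.latency_ms for r in self.records) / n,
            "total_cost_usd": sum(r.cost_usd for r in self.records),
            # Share of calls that failed, between 0.0 and 1.0.
            "error_rate": sum(1 for r in self.records if not r.ok) / n,
        }
```

In practice an application would call `log` once per request and read `summary` on a schedule or from a dashboard.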

Evaluation framework

Test and compare different prompts and models against your own benchmarks before deployment
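As a rough illustration of comparing prompts against a benchmark, the sketch below scores each prompt template by exact-match accuracy over a set of cases. The functions `score` and `best_prompt`, the `{input}` placeholder, and the exact-match metric are all assumptions made for this example, not part of any Keywords AI interface.

```python
from typing import Callable

# A model is anything that maps a prompt string to a completion string.
Model = Callable[[str], str]


def score(model: Model, template: str, cases: list[tuple[str, str]]) -> float:
    """Fraction of cases whose stripped output equals the expected answer."""
    if not cases:
        return 0.0
    hits = sum(
        1
        for question, expected in cases
        if model(template.format(input=question)).strip() == expected
    )
    return hits / len(cases)


def best_prompt(
    model: Model,
    templates: dict[str, str],
    cases: list[tuple[str, str]],
) -> tuple[str, dict[str, float]]:
    """Score every template and return the top-scoring name plus all scores."""
    scores = {name: score(model, tpl, cases) for name, tpl in templates.items()}
    return max(scores, key=scores.get), scores
```

Exact match is the simplest possible metric; real benchmarks often need fuzzier scoring, which would replace the comparison inside `score`.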

Prompt optimisation

Iterate on prompts systematically with built-in tools to measure improvements

Unified LLM gateway

Route requests to different LLM providers through a single interface, making it easier to switch models or run A/B tests
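The idea of a single interface in front of several providers can be sketched as follows. This is a generic illustration under stated assumptions, not the Keywords AI gateway: the `Gateway` class, its methods, and the stub providers are hypothetical, and real providers would be HTTP clients rather than local functions.

```python
import random
from typing import Callable, Optional

# A provider is any callable that turns a prompt into a completion.
Provider = Callable[[str], str]


class Gateway:
    """Hypothetical gateway: one entry point, many registered providers."""

    def __init__(self, seed: Optional[int] = None) -> None:
        self._providers: dict[str, Provider] = {}
        self._rng = random.Random(seed)

    def register(self, name: str, provider: Provider) -> None:
        self._providers[name] = provider

    def complete(self, model: str, prompt: str) -> str:
        """Send the prompt to the named provider."""
        if model not in self._providers:
            raise KeyError(f"unknown model: {model}")
        return self._providers[model](prompt)

    def ab_complete(self, weights: dict[str, float], prompt: str) -> tuple[str, str]:
        """Pick a model at random in proportion to its weight, then call it."""
        names = list(weights)
        chosen = self._rng.choices(names, weights=[weights[n] for n in names], k=1)[0]
        return chosen, self.complete(chosen, prompt)


# Stub providers standing in for real LLM clients.
gateway = Gateway(seed=0)
gateway.register("model-a", lambda p: f"[model-a] {p}")
gateway.register("model-b", lambda p: f"[model-b] {p}")
```

Because callers only name a model, switching providers is a one-string change, and an A/B test is a call such as `gateway.ab_complete({"model-a": 0.9, "model-b": 0.1}, prompt)`.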

Logs and analytics

Centrally store and analyse conversation logs to spot patterns and debug issues

Use cases

Monitoring production LLM applications to catch performance degradation or cost overruns early

Running prompt experiments and A/B tests to find the best version before rolling out to users

Evaluating whether switching to a cheaper or faster LLM provider will affect application quality

Debugging unexpected behaviour in AI-powered features by reviewing logs and conversation history

Building a feedback loop where production data informs prompt and model improvements