Open dataset of real-world LLM performance on Apple Silicon

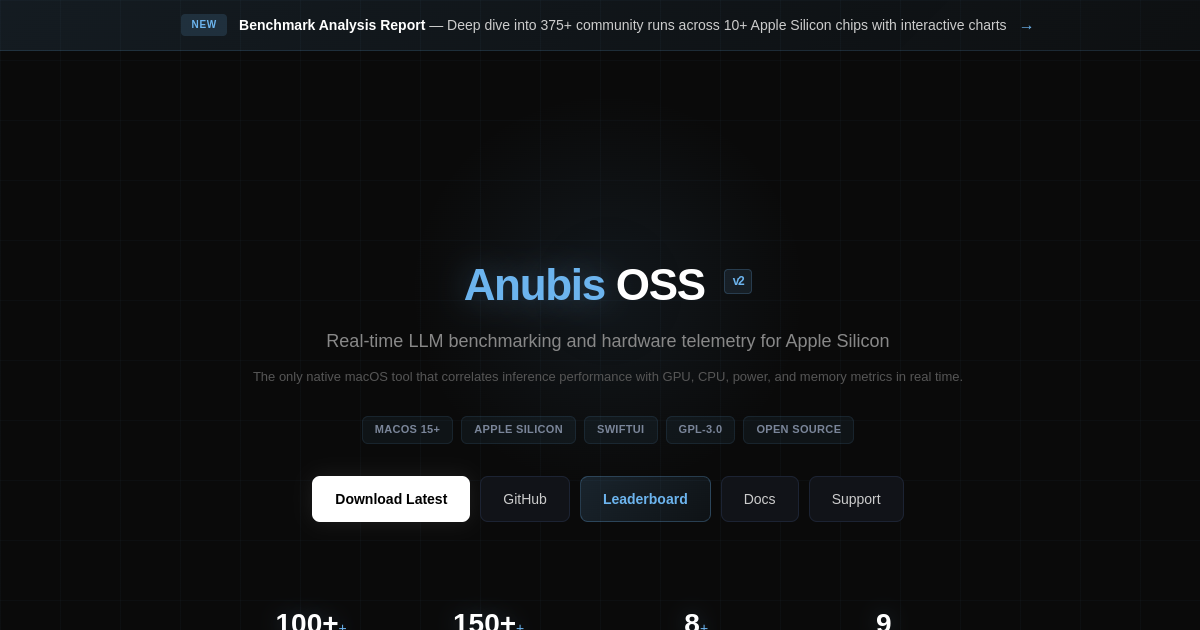

Open dataset of real-world LLM performance benchmarks on Apple Silicon processors

Performance comparison tools across different M-series chip generations

Model optimization recommendations for Apple Silicon architecture

Inference speed and resource consumption metrics (CPU, memory, power usage)

Community-contributed benchmark results and use case studies

Real-world performance data from diverse LLM architectures and sizes

Developers evaluating which LLMs to deploy in Mac applications

Researchers studying ARM-based chip performance for ML workloads

Mac users deciding whether to run language models locally or via cloud APIs

ML engineers optimising inference pipelines for Apple Silicon devices

Software companies building AI features into macOS applications