Open Interpreter

Open Interpreter is an open-source tool that lets Large Language Models (LLMs) execute code directly on your computer to automate tasks. With 49,000 star

Direct code execution

LLMs can write and run Python, JavaScript, and shell commands on your machine

Open source

Full codebase available on GitHub for transparency and community contributions

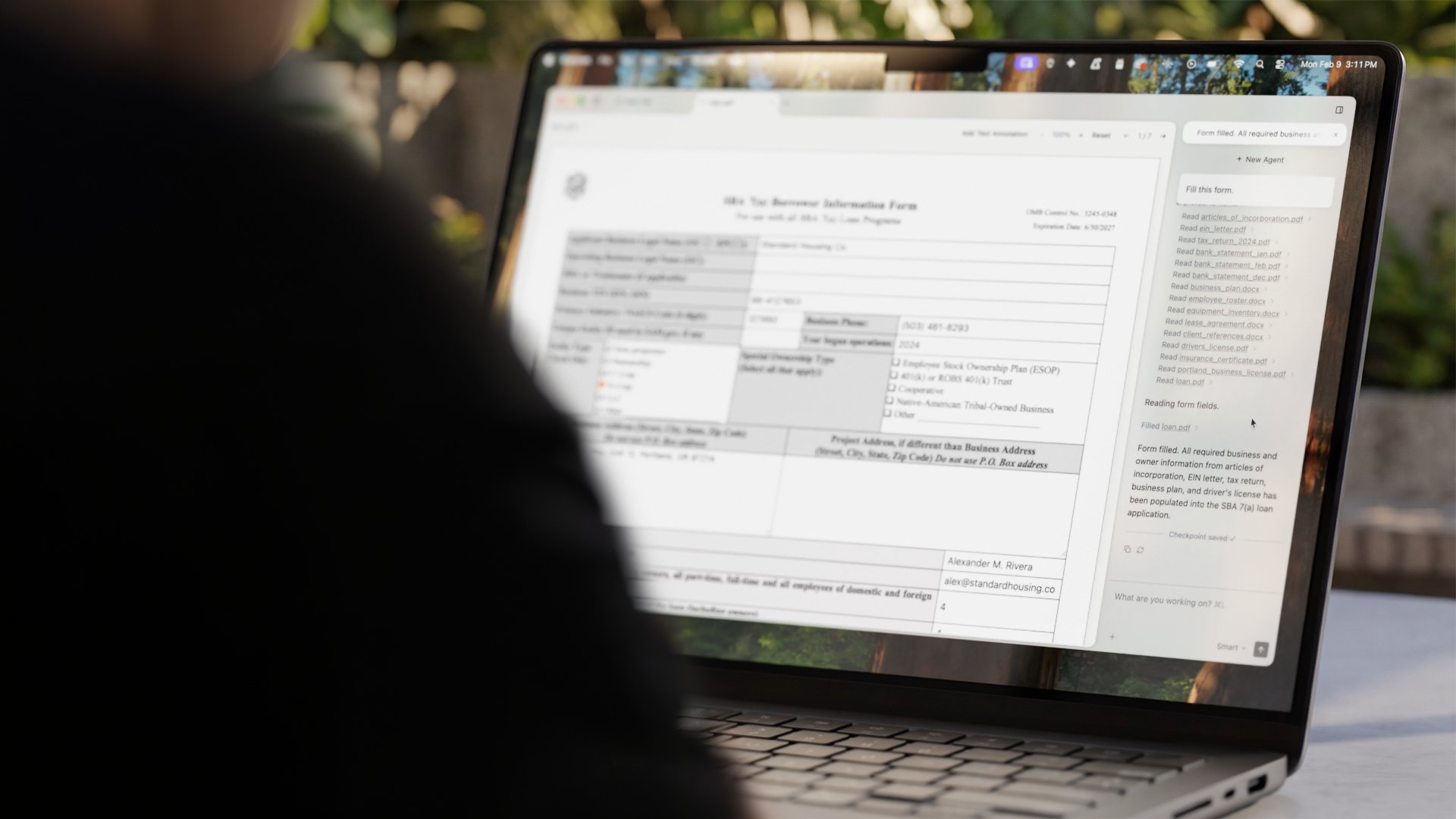

Desktop application

Graphical interface for those who prefer not to use the command line

Local and remote operation

Run on your own computer or integrate with hosted models

Task automation

Handle file operations, data analysis, web scraping, and other automated workflows

Multi-language support

Execute code across different programming languages
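
The direct, multi-language execution described above can be illustrated with a minimal sketch. This is not Open Interpreter's actual implementation. The `RUNNERS` table, the `run_snippet` function, and the `approve` callback are hypothetical names chosen for this example, and the approval step is an assumption of the sketch. It also assumes `sh` and `node` are installed if you use those languages.

```python
import subprocess
import sys

# Hypothetical mapping from a language label to the command that runs a snippet.
# Each command takes the source text as its final argument.
RUNNERS = {
    "python": [sys.executable, "-c"],
    "shell": ["sh", "-c"],
    "javascript": ["node", "-e"],
}


def run_snippet(language, code, approve=lambda lang, src: False, timeout=30):
    """Run model-generated code in a subprocess, but only if `approve` allows it.

    Returns (returncode, stdout, stderr), or None when approval is denied.
    """
    if language not in RUNNERS:
        raise ValueError(f"unsupported language: {language}")
    # Assumed safety gate: nothing runs unless the caller explicitly approves.
    if not approve(language, code):
        return None
    result = subprocess.run(
        RUNNERS[language] + [code],
        capture_output=True,
        text=True,
        timeout=timeout,
    )
    return result.returncode, result.stdout, result.stderr


if __name__ == "__main__":
    # Auto-approve only for this demonstration; a real tool would ask the user.
    outcome = run_snippet("python", "print(2 + 3)", approve=lambda lang, src: True)
    print(outcome)
```

The default `approve` callback rejects everything, so code runs only when the caller opts in. That is a cautious default for anything that executes generated code on a local machine.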

Automating repetitive data processing tasks without writing scripts yourself

Generating and processing files, images, or documents through natural language commands

Learning to code by watching an AI write and explain code in response to requests

Building custom automation workflows that combine multiple tools and APIs

Rapid prototyping of ideas that require code execution