Opik

Evaluate, test, and ship LLM applications with a suite of observability tools to calibrate language model outputs across your dev and production lifecycle.

LLM Evaluation Suite

Thorough tools for testing language model outputs against custom criteria and benchmarks

Observability Across Lifecycle

Monitor and track model behavior from development through production deployment

Output Calibration

Systematically evaluate and improve language model responses using metrics and scoring

Integration Support

Connect with popular LLM frameworks and development workflows via APIs

Comparative Analysis

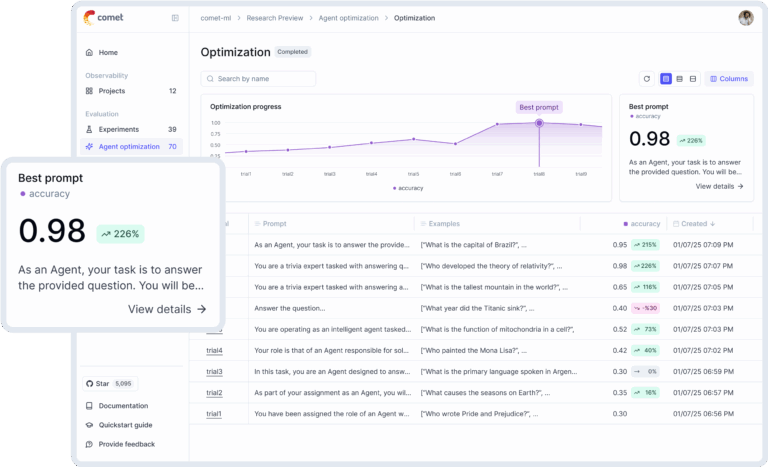

A/B test different models, prompts, and configurations to identify the best-performing combination

Quality Metrics

Built-in and customizable evaluation metrics to assess accuracy, safety, and user satisfaction

Pre-production evaluation: Systematically test LLM applications before shipping to ensure quality and safety

Prompt optimization: Compare different prompts and configurations to identify the best-performing variants

Continuous monitoring: Track model performance in production and alert teams to quality degradation

Regulatory compliance: Maintain audit trails and evaluation records for compliance and governance

Model comparison: Evaluate different LLM providers or fine-tuned models to select the best option
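Prompt optimization and model comparison both come down to the same loop: score each variant's outputs against reference answers with a metric, then rank the variants by their average score. The sketch below illustrates that loop in plain Python under simple assumptions (one reference answer per input, a single scalar metric). The names `exact_match`, `score_variant`, and `pick_best` are hypothetical and chosen for illustration only. They are not part of Opik's SDK, which ships its own built-in metrics.

```python
from statistics import mean
from typing import Callable

# Hypothetical metric type: maps (model output, expected answer) to a score in [0, 1].
Metric = Callable[[str, str], float]


def exact_match(output: str, expected: str) -> float:
    """Return 1.0 when the output equals the expected answer after trimming whitespace and lowercasing, else 0.0."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def score_variant(outputs: list[str], expected: list[str], metric: Metric) -> float:
    """Average a metric over outputs paired index-by-index with their reference answers."""
    if len(outputs) != len(expected):
        raise ValueError("outputs and expected must have the same length")
    return mean(metric(o, e) for o, e in zip(outputs, expected))


def pick_best(
    variants: dict[str, list[str]], expected: list[str], metric: Metric
) -> tuple[str, dict[str, float]]:
    """Score every variant and return the highest-scoring name plus all scores (ties go to the first variant listed)."""
    scores = {name: score_variant(outs, expected, metric) for name, outs in variants.items()}
    best = max(scores, key=scores.get)
    return best, scores


if __name__ == "__main__":
    expected = ["Paris", "4", "blue"]
    # Hypothetical outputs from two prompt variants run on the same three inputs.
    variants = {
        "prompt_a": ["Paris", "5", "Blue"],
        "prompt_b": ["paris", "4", "blue "],
    }
    best, scores = pick_best(variants, expected, exact_match)
    print(best, scores)
```

Exact match is deliberately crude. For open-ended generation you would typically swap in a metric suited to the task, such as a semantic-similarity or LLM-as-judge score, while keeping the same score-then-rank structure.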