Removal AI

Detect and remove offensive and undesired content using advanced filtering that helps you maintain your content standards.

Content detection

Automatically identifies offensive language, hate speech, and inappropriate material in text

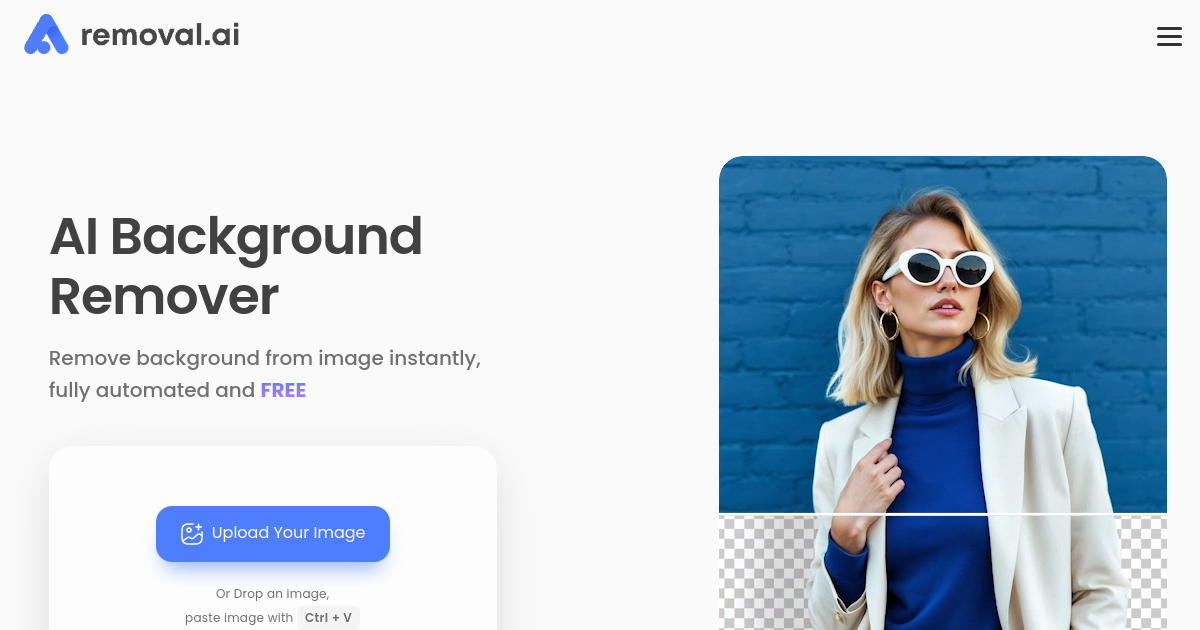

Image filtering

Scans images for undesired visual content

Customisable rules

Set your own standards for what gets flagged or removed based on your community guidelines
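As an illustration of how community guidelines could be turned into rules, here is a minimal Python sketch. The `Rule` class, its fields, and the `"flag"`/`"remove"` actions are hypothetical and are not Removal AI's actual configuration format. The sketch only shows one way to map patterns to actions.

```python
import re
from dataclasses import dataclass


@dataclass
class Rule:
    # Hypothetical rule format (not Removal AI's API):
    # a regex pattern plus the action to take when it matches.
    name: str
    pattern: str
    action: str  # "flag" or "remove"


def apply_rules(text: str, rules: list[Rule]) -> tuple[str, list[str]]:
    """Return the cleaned text and the names of rules that flagged it."""
    flagged = []
    for rule in rules:
        regex = re.compile(rule.pattern, re.IGNORECASE)
        if not regex.search(text):
            continue
        if rule.action == "remove":
            # Mask each match with asterisks of the same length.
            text = regex.sub(lambda m: "*" * len(m.group(0)), text)
        elif rule.action == "flag":
            # Keep the text as-is but record the rule for moderator review.
            flagged.append(rule.name)
        else:
            raise ValueError(f"unknown action: {rule.action}")
    return text, flagged


if __name__ == "__main__":
    rules = [
        Rule("mild-profanity", r"\bdarn\b", "remove"),
        Rule("external-link", r"https?://\S+", "flag"),
    ]
    print(apply_rules("Darn it, visit http://example.com", rules))
```

Running it prints `('**** it, visit http://example.com', ['external-link'])`. The first rule masks the matched word and the second records the rule name for review, which mirrors the choice between flagging and removing.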

Batch processing

Filter multiple items at once rather than one by one

API integration

Connect the tool to your platform or workflow through an API

Reporting

Generate logs of flagged content for moderation review
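Batch processing and reporting work well together: check many items in one pass and keep a log of anything flagged for moderators. The sketch below is a hypothetical Python illustration that uses a simple word blocklist. `moderate_batch`, `BLOCKLIST`, and the log fields are assumptions and are not part of Removal AI's API. Exact word matching is also far cruder than a real content detector.

```python
import json
from datetime import datetime, timezone

# Hypothetical blocklist; a real setup would load rules from configuration.
BLOCKLIST = {"spamword", "badword"}


def moderate_batch(items: dict[str, str], blocklist: set[str] = BLOCKLIST) -> list[dict]:
    """Check every item in one pass and return log entries for flagged ones."""
    log = []
    for item_id, text in items.items():
        # Normalise words: strip common punctuation and lowercase them.
        words = {w.strip(".,!?").lower() for w in text.split()}
        hits = sorted(words & blocklist)
        if hits:
            log.append({
                "item_id": item_id,
                "matched": hits,
                "flagged_at": datetime.now(timezone.utc).isoformat(),
            })
    return log


if __name__ == "__main__":
    comments = {"c1": "Great post!", "c2": "Buy now SPAMWORD!!", "c3": "Thanks."}
    print(json.dumps(moderate_batch(comments), indent=2))
```

Running the script prints one JSON log entry, for `c2`, because it is the only comment that contains a blocklisted word.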

Use cases

Moderating user comments on community forums or social platforms

Filtering uploaded content in marketplace or sharing platforms

Pre-screening content in messaging or chat applications

Protecting brand safety by removing offensive material from branded content channels

Supporting compliance requirements for platforms that must maintain content standards